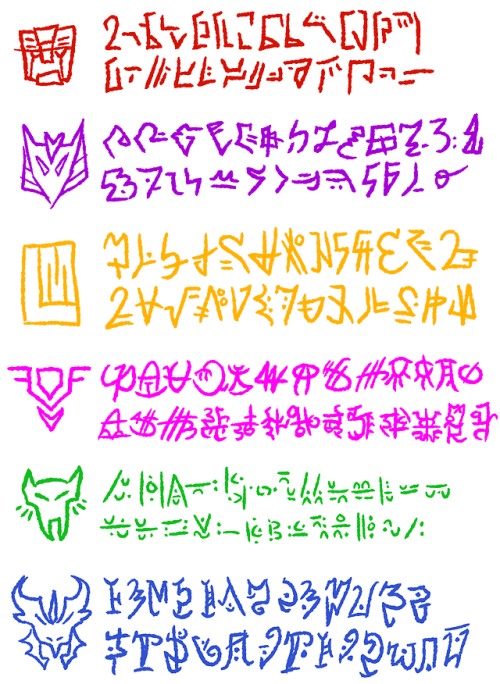

Cybertronian language translator

Vocabulary

We use the spaCy Python package for vocabulary encoding. Vocabulary indices are assigned by word frequency, with indices 0 to 3 reserved for special tokens. Uncommon words that appear fewer than 2 times in the dataset are replaced with the unknown-word token. Inside the Transformer structure, the input encoding (the frequency-based indices) passes through an `nn.Embedding` layer, which converts it to the dimension that `nn.Transformer` works in. This embedding mapping is applied per word: from an input sentence of 10 German words, we get a tensor of length 10 where each position holds the embedding of the corresponding word.

Positional Encoding

Compared to RNNs, Transformers are different in requiring positional encoding. An RNN reads words one after another, so order is implicit; a Transformer processes all positions in parallel, so position information has to be supplied explicitly.
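The vocabulary scheme described in this section (indices ordered by frequency, four reserved slots, rare words mapped to an unknown-word token) can be sketched in plain Python. This is a minimal illustration, not the tutorial's actual code: the special-token names and their order, the `build_vocab` and `encode` helpers, and the whitespace tokenization (standing in for spaCy) are all assumptions made for the example.

```python
from collections import Counter

# Assumed special tokens. The article reserves indices 0-3, but the names
# and their order here are illustrative and not taken from the source.
SPECIALS = ["<unk>", "<pad>", "<sos>", "<eos>"]

def build_vocab(tokenized_sentences, min_freq=2):
    """Map tokens to indices: specials first, then words by descending frequency."""
    counts = Counter(tok for sent in tokenized_sentences for tok in sent)
    stoi = {tok: idx for idx, tok in enumerate(SPECIALS)}
    # Sort by frequency; ties are broken alphabetically so the result is deterministic.
    for tok, freq in sorted(counts.items(), key=lambda kv: (-kv[1], kv[0])):
        if freq >= min_freq and tok not in stoi:
            stoi[tok] = len(stoi)
    return stoi

def encode(tokens, stoi):
    """Wrap a sentence in start/end markers and map out-of-vocabulary words to <unk>."""
    unk = stoi["<unk>"]
    return [stoi["<sos>"]] + [stoi.get(t, unk) for t in tokens] + [stoi["<eos>"]]

# Toy corpus, tokenized by whitespace instead of spaCy to stay dependency-free.
corpus = [
    "zwei junge personen".split(),
    "zwei personen fahren".split(),
    "zwei hunde".split(),
]
vocab = build_vocab(corpus)
ids = encode("zwei junge personen".split(), vocab)
```

In this toy corpus only "zwei" and "personen" occur at least twice, so they receive the first indices after the reserved ones, while "junge" falls back to the unknown-word index. The resulting integer list is what an embedding layer such as `nn.Embedding` would then convert into vectors.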


Input Sequence and Embedding Module

At the start, we have our input sequence. For example, we start with the German sentence “Zwei junge personen fahren mit dem schlitten einen hügel hinunter.” The ground truth English translation is “Two young people are going down a hill on a slide.” Below, we show how the Transformer is used, with some insight into its inner workings. The model expects the source German sentence together with whatever translation has been inferred so far. The translation process is therefore a feedback loop: each pass predicts the next word of the translation.

Figure 2: An example showing how a Transformer translates from German to English (Image by Authors)

Dataset

For the task of translation, we use the German-English `Multi30k` dataset from `torchtext`. This dataset is small enough to train on in a short period of time, but big enough to show reasonable language relations. It consists of 30k paired German and English sentences. To improve computational efficiency, the translation pairs are sorted by length. Because the lengths of the German and English sentences in a pair can differ significantly, the sort uses both the combined length and the individual lengths. The sorted pairs are then loaded as batches. For Transformers, both the German and the English sequence in each pair are padded to a fixed length and accompanied by location-based masks. Our model is trained to take German sentences as input and produce English sentences as output.
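The sort, batch, and pad steps for the dataset can be sketched as follows. This is a hedged illustration in plain Python rather than the tutorial's own pipeline: the padding index `PAD = 1`, the helper names, and the choice to pad each batch to its longest sequence are assumptions made for the example. The mask marks padded positions with `True`, which matches the boolean convention PyTorch uses for key padding masks.

```python
PAD = 1  # assumed index of the padding token

def sort_pairs(pairs):
    """Order (source, target) pairs by combined length, then by each side's length."""
    return sorted(pairs, key=lambda p: (len(p[0]) + len(p[1]), len(p[0]), len(p[1])))

def make_batches(pairs, batch_size):
    """Sort the pairs, then slice them into consecutive batches of similar length."""
    ordered = sort_pairs(pairs)
    return [ordered[i:i + batch_size] for i in range(0, len(ordered), batch_size)]

def pad_batch(seqs, pad=PAD):
    """Pad sequences to the longest one in the batch; mask is True where padded."""
    width = max(len(s) for s in seqs)
    padded = [s + [pad] * (width - len(s)) for s in seqs]
    mask = [[pos >= len(s) for pos in range(width)] for s in seqs]
    return padded, mask

# Toy (German ids, English ids) pairs with made-up token indices.
pairs = [
    ([2, 7, 8, 9, 3], [2, 5, 6, 3]),
    ([2, 7, 3], [2, 5, 3]),
    ([2, 7, 8, 3], [2, 5, 6, 4, 3]),
]
batches = make_batches(pairs, batch_size=2)
src_padded, src_mask = pad_batch([src for src, _ in batches[0]])
```

Sorting first means each batch groups sentences of similar length, so fewer padding positions are wasted per batch.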


To start with, let’s talk about how data flows through the translation process. The data flow follows the diagram in Figure 1. An input sequence is converted to a tensor of embeddings and passed through the Transformer; each of the Transformer’s outputs then goes through an unpictured “de-embedding” step that converts it from an embedding to the final output sequence. Note that during inference we obtain words one by one, one per forward pass, rather than receiving a translation of the full text from a single pass.
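The word-by-word inference described here can be sketched as a simple loop. This is only an illustration of the feedback idea: `greedy_translate`, the `SOS` and `EOS` indices, and `toy_step` (a hypothetical stand-in for one forward pass of a trained model) are assumptions, not code from the tutorial.

```python
SOS, EOS = 2, 3  # assumed indices for the start- and end-of-sentence tokens

def greedy_translate(model_step, src_ids, max_len=20):
    """Generate target ids one at a time, feeding each prediction back in."""
    tgt_ids = [SOS]
    for _ in range(max_len):
        # One forward pass sees the source and the translation so far,
        # and yields exactly one new token.
        next_id = model_step(src_ids, tgt_ids)
        tgt_ids.append(next_id)
        if next_id == EOS:
            break
    return tgt_ids

def toy_step(src_ids, tgt_ids):
    """Hypothetical stand-in for a trained model: echoes the source, then stops."""
    produced = len(tgt_ids) - 1  # tokens generated so far, excluding SOS
    return src_ids[produced] if produced < len(src_ids) else EOS

result = greedy_translate(toy_step, [10, 11, 12])
truncated = greedy_translate(toy_step, [10, 11, 12], max_len=2)
```

With a real model, `model_step` would run the Transformer on the padded source and the partial target, then pick the most likely next word from the de-embedded output. The `max_len` cap guards against a model that never emits the end-of-sentence token.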


Language Translation with Transformers in PyTorch

Mike Wang, John Inacay, and Wiley Wang (All authors contributed equally)

If you’ve been using online translation services, you may have noticed that the translation quality has significantly improved in recent years. Since it was introduced in 2017, the Transformer deep learning model has rapidly replaced the recurrent neural network (RNN) model as the model of choice in natural language processing (NLP) tasks. Prominent examples include OpenAI’s Generative Pre-trained Transformer (GPT) and Google’s Bidirectional Encoder Representations from Transformers (BERT). Thanks to the Transformer’s parallelization ability and modern computing power, these models are big and fast evolving, and generative language models frequently draw media attention for their capabilities. If you’re like us, relatively new to NLP but with a general understanding of machine learning fundamentals, this tutorial may help you kick-start your understanding of Transformers with a real-life example: building an end-to-end German-to-English translator.

Using an openly available dataset, we’ll demonstrate our Colab implementation of German-to-English translation with PyTorch and the Transformer model.

Figure 1: The sequence of data flowing all the way from input to output (Image by Authors)

In creating this tutorial, we based our work on two resources: the PyTorch RNN-based language translator tutorial and a translator implementation by Andrew Peng.